Humans observe the world through a combination of different modalities, like vision, hearing, and our understanding of language. Machines, on the other hand, interpret the world through data that algorithms can process.

So, when a machine “sees” a photo, it must encode that photo into data it can use to perform a task like image classification. This process becomes more complicated when inputs come in multiple formats, like videos, audio clips, and images.

“The main challenge here is, how can a machine align those different modalities? As humans, this is easy for us. We see a car and then hear the sound of a car driving by, and we know these are the same thing. But for machine learning, it is not that straightforward,” says Alexander Liu, a graduate student in the Computer Science and Artificial Intelligence Laboratory (CSAIL) and first author of a paper tackling this problem.

Liu and his collaborators developed an artificial intelligence technique that learns to represent data in a way that captures concepts shared between visual and audio modalities. For instance, their method can learn that the action of a baby crying in a video is related to the spoken word “crying” in an audio clip.

Using this knowledge, their machine-learning model can identify where a certain action is taking place in a video and label it.

It performs better than other machine-learning methods at cross-modal retrieval tasks, which involve finding a piece of data, like a video, that matches a user’s query given in another form, like spoken language. Their model also makes it easier for users to see why the machine thinks the video it retrieved matches their query.

This technique could someday be used to help robots learn about concepts in the world through perception, more like the way humans do.

Joining Liu on the paper are CSAIL postdoc SouYoung Jin; grad students Cheng-I Jeff Lai and Andrew Rouditchenko; Aude Oliva, senior research scientist in CSAIL and MIT director of the MIT-IBM Watson AI Lab; and senior author James Glass, senior research scientist and head of the Spoken Language Systems Group in CSAIL. The research will be presented at the Annual Meeting of the Association for Computational Linguistics.

Learning representations

The researchers focus their work on representation learning, which is a form of machine learning that seeks to transform input data to make it easier to perform a task like classification or prediction.

The representation learning model takes raw data, such as videos and their corresponding text captions, and encodes them by extracting features, or observations about objects and actions in the video. Then it maps those data points in a grid, known as an embedding space. The model clusters similar data together as single points in the grid. Each of these data points, or vectors, is represented by an individual word.

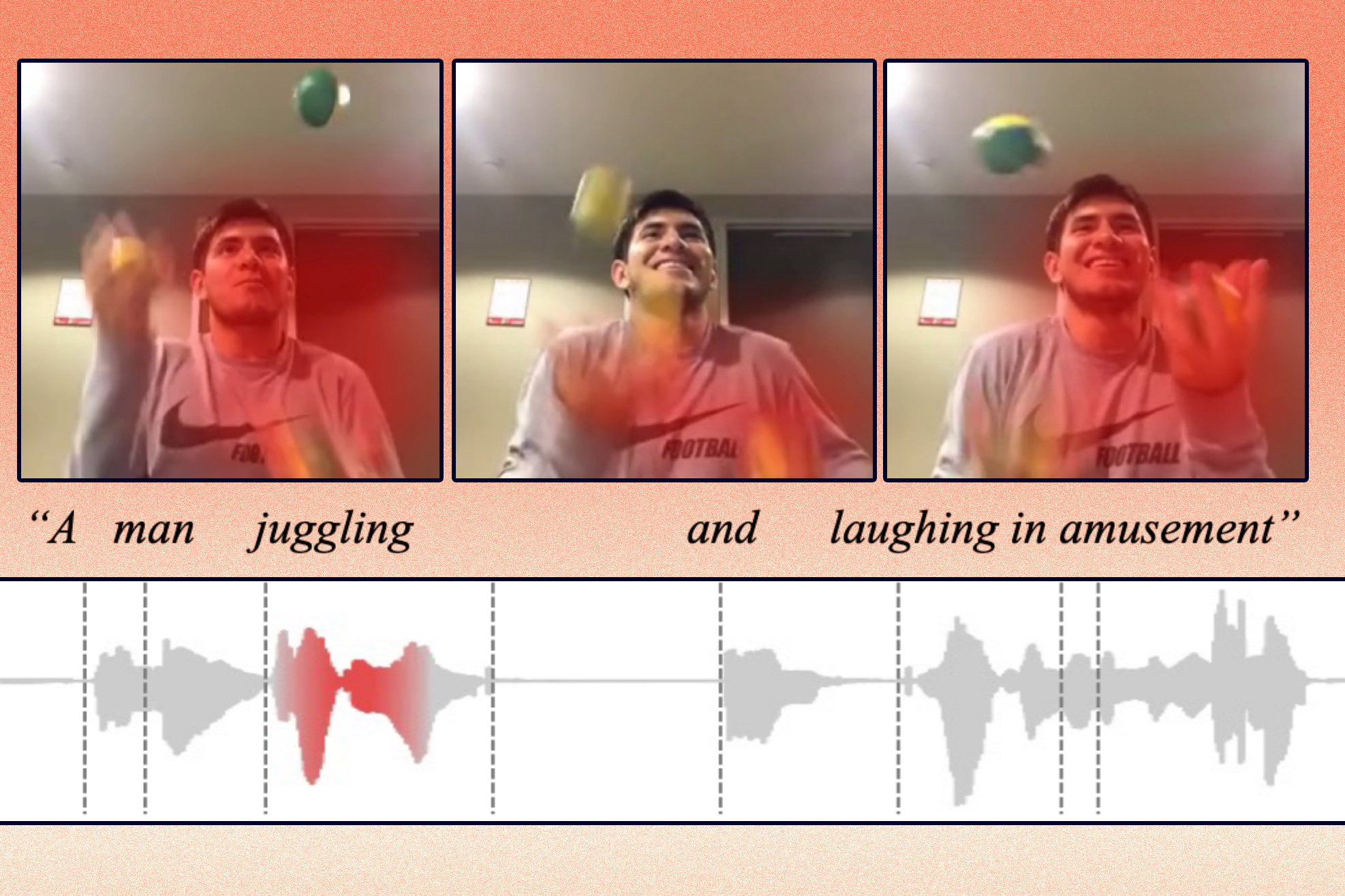

For instance, a video clip of a person juggling might be mapped to a vector labeled “juggling.”

The researchers constrain the model so it can only use 1,000 words to label vectors. The model can decide which actions or concepts it wants to encode into a single vector, but it can only use 1,000 vectors. The model chooses the words it thinks best represent the data.
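One way to picture this constraint is as a nearest-neighbor lookup against a fixed table of 1,000 vectors. The sketch below is only an illustration under stated assumptions: the feature size, the random table, and the `nearest_code` helper are hypothetical and are not taken from the researchers' model, which learns its vectors during training.

```python
import math
import random

def nearest_code(feature, codebook):
    """Return the index of the codebook vector closest to `feature`."""
    return min(range(len(codebook)), key=lambda i: math.dist(feature, codebook[i]))

random.seed(0)
DIM = 8            # illustrative feature size (an assumption, not from the paper)
NUM_CODES = 1000   # the cap on vectors described in the article

# Hypothetical table of vectors; a trained model would learn these values.
codebook = [[random.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_CODES)]

# A made-up clip feature that lies very close to entry 42 of the table.
clip_feature = [x + 0.01 for x in codebook[42]]

# However varied the inputs are, each one is snapped to one of the 1,000 entries.
code_index = nearest_code(clip_feature, codebook)
```

Because every input must land on one of a limited number of entries, similar clips end up sharing an entry, which is what lets a single word stand in for the whole cluster.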

Rather than encoding data from different modalities onto separate grids, their method employs a shared embedding space where two modalities can be encoded together. This enables the model to learn the relationship between representations from two modalities, like video that shows a person juggling and an audio recording of someone saying “juggling.”

To help the system process data from multiple modalities, they designed an algorithm that guides the machine to encode similar concepts into the same vector.

“If there is a video about pigs, the model might assign the word ‘pig’ to one of the 1,000 vectors. Then if the model hears someone saying the word ‘pig’ in an audio clip, it should still use the same vector to encode that,” Liu explains.
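Liu's pig example can be sketched as two inputs from different modalities being looked up in the same table. Everything here is hypothetical: the three-entry table stands in for the 1,000 vectors, the feature values are invented, and the word labels are attached up front only for readability. It does not show the training algorithm that encourages this alignment, only the outcome that algorithm aims for.

```python
import math

# Hypothetical shared table: three entries for brevity (the article describes 1,000).
shared_codebook = {
    "pig": [1.0, 0.0],
    "juggling": [0.0, 1.0],
    "crying": [-1.0, 0.0],
}

def quantize(feature, codebook):
    """Return the label of the codebook vector closest to `feature`."""
    return min(codebook, key=lambda word: math.dist(feature, codebook[word]))

video_feature = [0.9, 0.2]    # made-up video-encoder output for a clip of pigs
audio_feature = [0.8, -0.1]   # made-up audio-encoder output for the spoken word "pig"

# Both modalities are looked up in the same table, so they can share an entry.
video_code = quantize(video_feature, shared_codebook)
audio_code = quantize(audio_feature, shared_codebook)
```

With separate tables per modality, nothing would tie the video entry to the audio entry; using one table is what makes "the same vector" a meaningful statement.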

A better retriever

They tested the model on cross-modal retrieval tasks using three datasets: a video-text dataset with video clips and text captions, a video-audio dataset with video clips and spoken audio captions, and an image-audio dataset with images and spoken audio captions.

For example, in the video-audio dataset, the model chose 1,000 words to represent the actions in the videos. Then, when the researchers fed it audio queries, the model tried to find the clip that best matched those spoken words.

“Just like a Google search, you type in some text and the machine tries to tell you the most relevant things you are searching for. Only we do this in the vector space,” Liu says.
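Searching "in the vector space" can be illustrated by ranking stored clips by how closely their vectors point in the same direction as the query's vector. This is a minimal sketch that assumes cosine similarity as the scoring rule; the article does not say which measure the researchers use, and the clip names and numbers below are invented.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def retrieve(query, clips):
    """Return clip names ordered from best to worst match for `query`."""
    return sorted(clips, key=lambda name: cosine(query, clips[name]), reverse=True)

# Hypothetical embeddings of three video clips in a shared space.
clip_embeddings = {
    "juggling_clip": [0.1, 0.9, 0.0],
    "pig_clip": [0.9, 0.1, 0.1],
    "crying_clip": [0.0, 0.2, 0.9],
}

# Made-up embedding of a spoken query, "juggling".
audio_query = [0.2, 0.8, 0.1]

ranking = retrieve(audio_query, clip_embeddings)
# ranking[0] is the clip the system would return first
```

The query and the clips never need to be the same kind of data; they only need to be encoded into the same space so that their vectors can be compared.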

Not only was their technique more likely to find better matches than the models they compared it to, it is also easier to understand.

Because the model could only use 1,000 total words to label vectors, a user can more easily see which words the machine used to conclude that the video and spoken words are similar. This could make the model easier to apply in real-world situations where it is vital that users understand how it makes decisions, Liu says.
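The interpretability argument can be made concrete with a small sketch: if a video and a spoken query are each reduced to a handful of word-labeled vectors, the words they have in common are a readable explanation for the match. The word lists and the `shared_codewords` helper below are hypothetical and only illustrate the idea.

```python
from collections import Counter

def shared_codewords(video_codes, audio_codes):
    """Return, in alphabetical order, the words both inputs were encoded with."""
    overlap = Counter(video_codes) & Counter(audio_codes)
    return sorted(overlap)

# Made-up word labels for the vectors each input was encoded into.
video_codes = ["juggling", "ball", "person", "juggling"]
audio_codes = ["juggling", "person", "park"]

explanation = shared_codewords(video_codes, audio_codes)
```

A user inspecting `explanation` would see a short list of ordinary words rather than a long vector of numbers, which is the sense in which a small vocabulary makes the match easier to understand.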

The model still has some limitations they hope to address in future work. For one, their research focused on data from two modalities at a time, but in the real world humans encounter many data modalities simultaneously, Liu says.

“And we know 1,000 words works on this kind of dataset, but we don’t know if it can be generalized to a real-world problem,” he adds.

In addition, the images and videos in their datasets contained simple objects or straightforward actions; real-world data are much messier. They also want to determine how well their method scales up when there is a wider diversity of inputs.

This research was supported, in part, by the MIT-IBM Watson AI Lab and its member companies, Nexplore and Woodside, and by the MIT Lincoln Laboratory.